Interactive Decision Making

In computer science, and machine learning in particular, the primary purpose of many systems is to help humans make decisions. At the same time, many of these systems stand to benefit from having a human in the loop, whether to reinforce good decisions, warn against bad ones, or simply provide expert advice in areas of uncertainty.

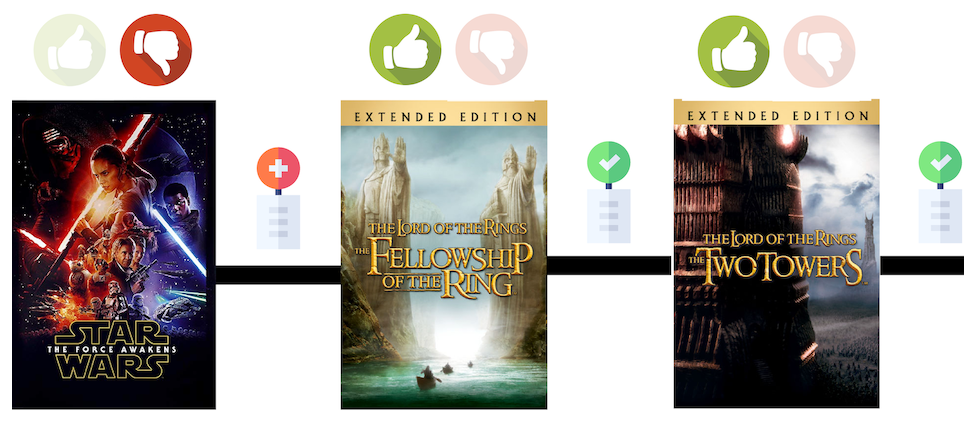

A simple, concrete example can be seen in recommender systems. Whether through explicit feedback (such as rating a movie on Netflix) or implicit feedback (such as clicking or not clicking on an advertisement), the vast majority of successful real-world recommender systems are constantly interacting with and adapting to each user.

One important research direction for our group is to mathematically formalize this idea of adaptivity and develop algorithms for interactive decision making that not only boast strong theoretical guarantees, but also perform well in practice.

- COLT 2021: Adaptivity in Adaptive Submodularity. Hossein Esfandiari, Amin Karbasi, Vahab S. Mirrokni
- NeurIPS 2019: Adaptive Sequence Submodularity. Marko Mitrovic, Ehsan Kazemi, Moran Feldman, Andreas Krause, Amin Karbasi
- AISTATS 2018: Comparison Based Learning from Weak Oracles. Ehsan Kazemi, Lin Chen, Sanjoy Dasgupta, Amin Karbasi
- AISTATS 2014: Near Optimal Bayesian Active Learning for Decision Making. Shervin Javdani, Yuxin Chen, Amin Karbasi, Andreas Krause, Drew Bagnell, Siddhartha S. Srinivasa
- ICML 2014: Near-Optimally Teaching the Crowd to Classify. Adish Singla, Ilija Bogunovic, Gábor Bartók, Amin Karbasi, Andreas Krause
- STACS 2013: Constrained Binary Identification Problem. Amin Karbasi, Morteza Zadimoghaddam
- ICML 2012: Comparison-Based Learning with Rank Nets. Amin Karbasi, Stratis Ioannidis, Laurent Massoulié
- ITA 2012: Hot or not: Interactive content search using comparisons. Amin Karbasi, Stratis Ioannidis, Laurent Massoulié
- ITW 2012: Sequential group testing with graph constraints. Amin Karbasi, Morteza Zadimoghaddam